Recent changes in DSM 7 have made it difficult to reliably free up ports 80 and 443 for use by traefik. By combining a few docker networking techniques built around the macvlan driver, we can sidestep this requirement once and for all.

You can find the necessary scripts and resources covered in this post in the gist linked below.

Overview

The macvlan driver gives each container on your system a "real" IP on your LAN. Why is this useful? In our case we need traefik (our reverse proxy) to bind to ports 80 and 443, but with the standard docker bridge those ports are published on the Synology's own IP, where DSM already uses them. Only one service can bind to a given port on a given IP at a time.

By using macvlan to give traefik a real IP, we are able to have traefik bind to ports 80 and 443 and leave DSM alone. This negates the need to patch DSM every time there's an update and will be far less brittle in future.

However, if we put traefik into its own network using macvlan then we have to solve another problem. How do the other containers on the system, which are most likely using the default bridge driver, route traffic between the Synology host and the "real" IP we gave to traefik?

The solution is in practice quite simple, but conceptually takes a bit to wrap your head around. We create a new docker bridge network - called frontend in the example code - and then add traefik to both the macvlan network and the frontend network. The final piece is to create a route so that packets from the Synology box end up where they're supposed to - for example ip route add 192.168.44.204/30 dev macvlan0.

The end result is that traefik can serve traffic on its own IP on the LAN and route traffic to containers running on the Synology itself.

Configuration

This post was written and tested against DSM 7.1-42661 Update 4 in September 2022.

The first step is to identify the network adapter to create the macvlan interface against. If you've been using Virtual Machine Manager, your interface names will start with ovs_ethX, and if you have a bonded network you'll see something like bond0. In my case, ovs_eth0 was the interface. Use ip link to find yours.

alexktz@elrond:~$ ip link
1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN mode DEFAULT group default qlen 1
    link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
2: sit0@NONE: <NOARP> mtu 1480 qdisc noop state DOWN mode DEFAULT group default qlen 1
    link/sit 0.0.0.0 brd 0.0.0.0
3: eth0: <BROADCAST,MULTICAST,SLAVE,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast master ovs-system state UP mode DEFAULT group default qlen 1000
    link/ether 00:11:32:e4:58:23 brd ff:ff:ff:ff:ff:ff
...
8: ovs_eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP mode DEFAULT group default qlen 1
    link/ether 00:11:32:e4:58:23 brd ff:ff:ff:ff:ff:ff

Add the macvlan0 interface with the following command.

ip link add macvlan0 link ovs_eth0 type macvlan mode bridge

Next, figure out the range of IPs you'll be using with this website. In my example I used 192.168.44.204/30 which is the range 204-207.
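If you'd rather check the range locally, the arithmetic is simple enough to do in the shell. The snippet below is only a sketch: cidr_range is a made-up helper name (it is not part of iproute2 or DSM), and it assumes a shell with 64-bit arithmetic, which is what you get on most modern systems.

```shell
#!/bin/sh
# Print the first and last address of an IPv4 CIDR block.
# cidr_range is a hypothetical helper name, not an existing tool.
cidr_range() {
    addr=${1%/*}      # part before the slash, e.g. 192.168.44.204
    prefix=${1#*/}    # part after the slash, e.g. 30

    # Split the dotted quad into four positional parameters.
    old_ifs=$IFS
    IFS=.
    set -- $addr
    IFS=$old_ifs

    ip_int=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    size=$(( 1 << (32 - prefix) ))       # number of addresses in the block
    first=$(( ip_int & ~(size - 1) ))    # clear the host bits
    last=$(( first + size - 1 ))

    for n in "$first" "$last"; do
        echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
    done
}

# Prints 192.168.44.204 and then 192.168.44.207
cidr_range 192.168.44.204/30
```

A /30 always covers four addresses, which is where the 204-207 range comes from.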

ip addr add 192.168.4.204/30 dev macvlan0
ip link set macvlan0 up

The final step for the macvlan side of things is to add the route so that containers running on the Synology box know where to route the packets intended for traefik.

ip route add 192.168.44.204/30 dev macvlan0

Now we move onto the docker side of the configuration. The first step here is to create the docker network for traefik to communicate with containers on the Synology host (not using the macvlan driver).

docker network create frontend

The rest of the configuration is handled in the docker-compose file. The full file is available in this gist.

Let's examine a few specific sections of that compose file. First, note that the traefik container is a member of two networks.

traefik:
  image: traefik
  container_name: tr
  ...
  networks:
    macvlan:
      ipv4_address: 192.168.44.204
    frontend:
  restart: unless-stopped

You can see that the traefik container has the IP 192.168.44.204. I created a wildcard DNS entry in my DNS server pointing to this IP (not the Synology box anymore where traefik used to live). traefik is also a member of the frontend network.

At the end of the compose file there is a section where we define the docker networks explicitly.

networks:
  frontend:
    external: true
  macvlan:
    name: macvlan
    driver: macvlan
    driver_opts:
      parent: ovs_eth0
    ipam:
      config:
        - subnet: 192.168.44.0/24
          ip_range: 192.168.44.204/30
          gateway: 192.168.44.254

You'll need to adjust the values to suit your configuration but hopefully it's straightforward from here.
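For comparison, the macvlan network could also be created from the command line, the same way frontend was. This is a sketch rather than what the gist does: macvlan_create_cmd is a made-up helper that only prints the docker command so you can review it before running it, and the values are the ones from my compose file.

```shell
#!/bin/sh
# Print (do not run) a docker CLI command roughly equivalent to the
# macvlan network definition in the compose file.
# macvlan_create_cmd is a hypothetical helper name.
macvlan_create_cmd() {
    parent=$1
    subnet=$2
    ip_range=$3
    gateway=$4
    echo "docker network create -d macvlan" \
         "--subnet=$subnet --ip-range=$ip_range --gateway=$gateway" \
         "-o parent=$parent macvlan"
}

macvlan_create_cmd ovs_eth0 192.168.44.0/24 192.168.44.204/30 192.168.44.254
```

If you did create the network by hand like this, I'd expect the compose entry to need external: true, just like frontend, so that compose doesn't try to manage it.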

Next, let's examine the test nginx container. You'll see that it is a member only of the frontend network and that there are no ports exposed publicly. traefik handles all public facing traffic and routes it around under the hood.

nginxtest:
  image: nginx
  container_name: nginxtest
  labels:
    - traefik.http.routers.nginxtest.rule=Host(`test.domain.com`)
  networks:
    - frontend

Automating interface creation on reboot

The final piece of this puzzle is to automate the creation of the macvlan interface on reboot.

First, create a script on the filesystem with the following contents (making sure to adjust the values as you need for your setup).

## get interface name (ovs_eth0 below) via ip link
ip link add macvlan0 link ovs_eth0 type macvlan mode bridge
##192.168.4.204/30 (204-207)
ip addr add 192.168.4.204/30 dev macvlan0
ip link set macvlan0 up
ip route add 192.168.44.204/30 dev macvlan0

Make sure the script is executable: chmod +x /path/to/script.sh.
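One thing to be aware of: running these commands a second time (say, with the Run button after the task already ran at boot) will most likely produce "File exists" errors, because the interface and route are already there. Below is a rough sketch of a re-runnable variant. The run and setup_macvlan helpers, the DRY_RUN switch and the apply argument are all made up for this example and are not in the gist. The addresses are copied as-is from the script above.

```shell
#!/bin/sh
# Re-runnable sketch of the boot script. Values copied from the script
# above; adjust them for your setup.
PARENT=ovs_eth0
SHIM=macvlan0
SHIM_ADDR=192.168.4.204/30
TRAEFIK_RANGE=192.168.44.204/30

# With DRY_RUN=1 the commands are printed instead of executed
# (an addition for this sketch, handy for checking values without root).
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

setup_macvlan() {
    # Create and address the interface only if it does not exist yet.
    if [ "${DRY_RUN:-0}" = 1 ] || ! ip link show "$SHIM" >/dev/null 2>&1; then
        run ip link add "$SHIM" link "$PARENT" type macvlan mode bridge
        run ip addr add "$SHIM_ADDR" dev "$SHIM"
    fi
    run ip link set "$SHIM" up
    # "replace" adds the route, or overwrites it if it already exists.
    run ip route replace "$TRAEFIK_RANGE" dev "$SHIM"
}

# Only touch the system when called as "script.sh apply";
# any other invocation just prints what would be run.
case "${1:-}" in
    apply) setup_macvlan ;;
    *)     DRY_RUN=1; setup_macvlan ;;
esac
```

With this variant the scheduled task would call /path/to/script.sh apply, and running it without arguments is a safe way to preview the commands.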

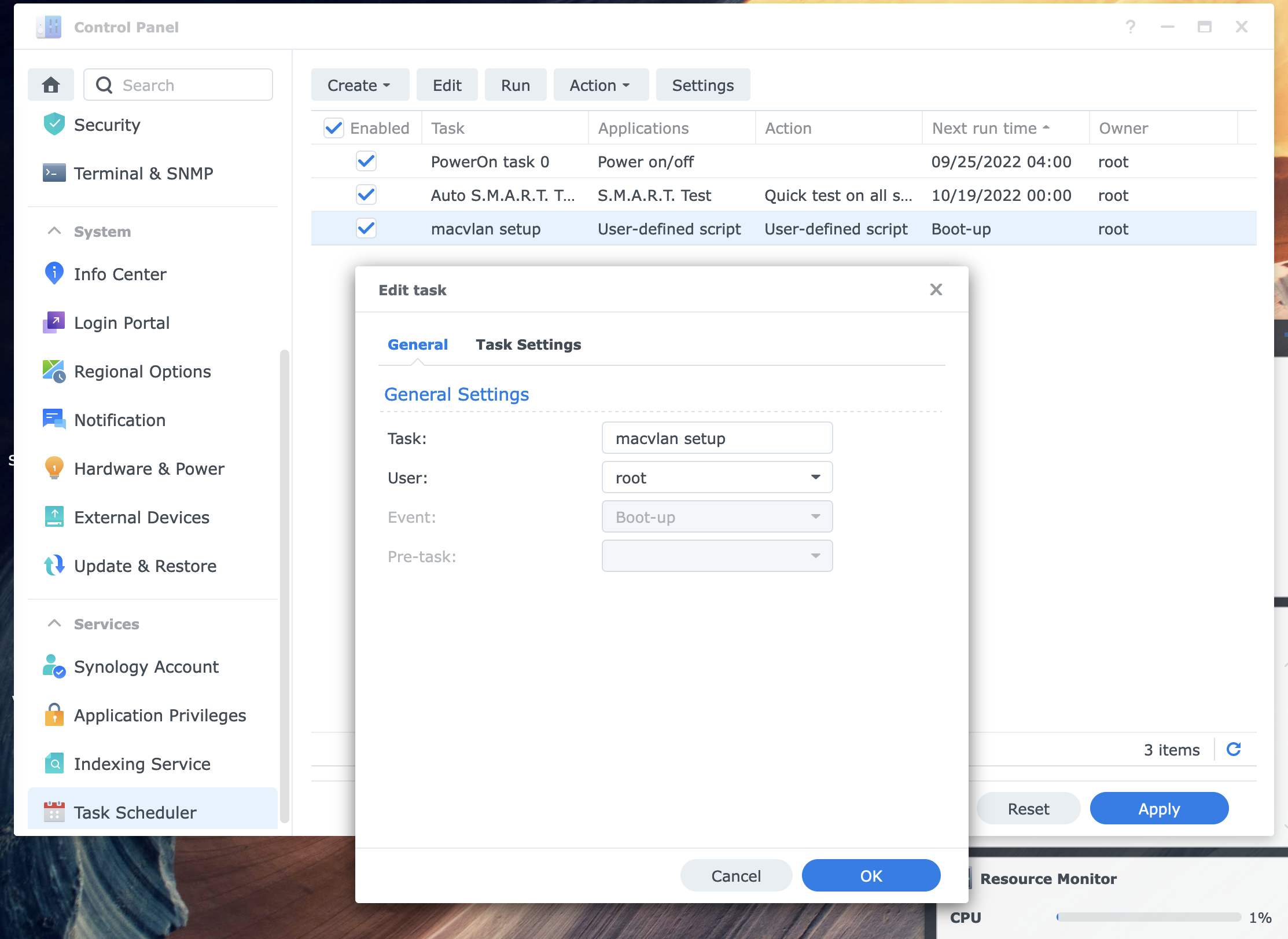

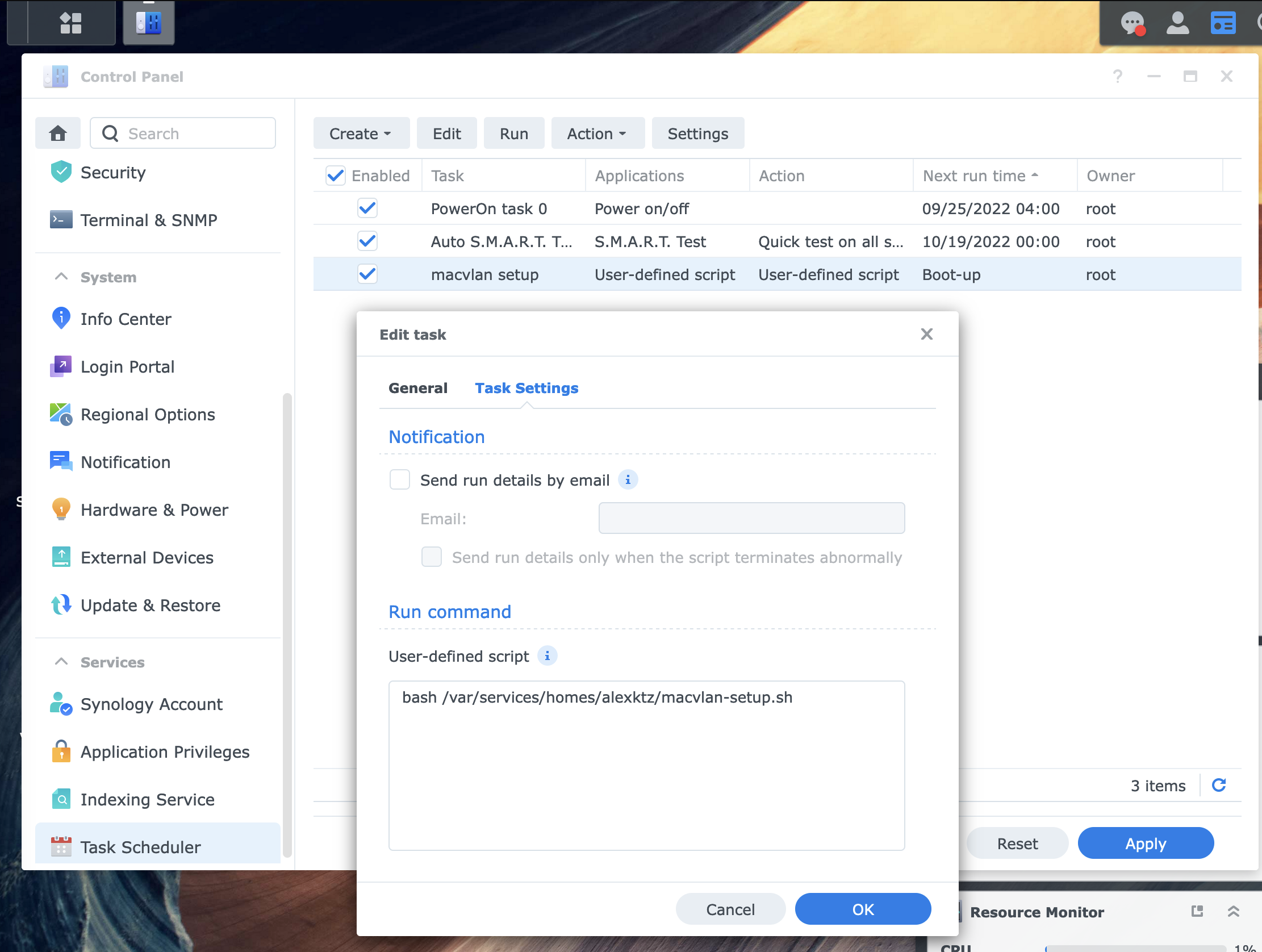

Next, using the Synology webUI add a scheduled task to execute this script as root on boot. It must be root or it will not have the required permissions to run.

To test, you can manually run the script from the webUI by clicking the run button on the task scheduler page. Use docker-compose to bring up your containers and verify it's working, then reboot and verify again (it may take 30-90 seconds after boot for all containers to come back up again depending on the app in question).
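Since containers can take a while to come back after a reboot, a small retry loop saves you from hammering refresh in the browser. This is a minimal sketch: wait_for is a made-up helper, and the curl example in the comment assumes traefik answers plain HTTP on 192.168.44.204 for the test.domain.com router from the compose snippet.

```shell
#!/bin/sh
# Retry a command until it succeeds or the attempts run out.
# Usage: wait_for ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# wait_for is a hypothetical helper name.
wait_for() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        i=$((i + 1))
        # Sleep only if another attempt is coming.
        if [ "$i" -le "$attempts" ]; then
            sleep "$delay"
        fi
    done
    return 1
}

# Example (not run here): poll traefik every 5 seconds, 18 times at most.
# wait_for 18 5 curl -fsS -o /dev/null -H "Host: test.domain.com" http://192.168.44.204/
```

Eighteen attempts five seconds apart covers roughly the 90 second window mentioned above.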

Summary

In conclusion then, we have configured traefik to run on its own IP and route traffic to the containers running on the Synology. As I said, in practice it's quite simple but conceptually requires some mental gymnastics to get your head around.

At the end of the gist, there is a collection of links that were part of the research that went into this one.